Being Blind

A Polemical Manual for Using New Audiovisual Technologies

published in Iris No.27 - special issue on the state of sound studies, Paris, 1999

Drawing the line

Forget everyone from Kracauer to Bazin to Metz to Mulvey and back again. When scrutinized for their audiovisual nous, the modernist pedagogy of film theoreticians as validated in countless Film Studies courses forms a large grey sound-absorbing blanket. Useful in regard to certain, fixed and/or limited critical ideologies, the acknowledged brethren of film theoreticians rarely account for the dimensional totality of the cinematic experience. For as those 'images flicker on the screen', sound is the great modulator: it allows image to be perceived sans-sound by the critically deaf. To those who care to seriously ponder how frequency, timbre, reverberation and volume contribute to the cinematic experience, sound's capacity to modulate its mechanical viscera posits it at the core of audiovisuality.

Yet it is tiresome to make such statements after nearly a century of audiovisual technologies predicated on the reproduction (as act, process and object) of synchronized images and sounds. And it is downright boring to pry sound from the cinema and pedantically qualify its difference to image, thereby giving further credence to image as prime governor of hierarchical order in the medium of film. There is no mistaking that sound is material in all manner of manifestation. It is physical, voluminous, encompassing, sexual. More a shock wave hurtling one into phenomenology than a route that guides one to ontology, the film soundtrack has still yet to be adequately catalogued - let alone theorized - as an audiovisual narration of spatialized dynamics. As far as I can remember, I have never been impressed by 'the screen' and its 'flickering images'. I float in the surround sound of the theatre's auditorium as its visuals move in concert with the sonorum of a movie. It never makes any sense to not talk about sound at every moment of cinematic inquiry.

What follows is a brief and condensed non-linear listing of issues related to the design and usage of the sound components of computer/digital/online audiovisual technologies. While these technologies continue to rejuvenate old hippies, excite young cyberpunks and bolster business lemmings, the past decade of hyperbole (a clanging collapse of false advertising, evangelistic proclamation and corporate projection) has demonstrated scant critical awareness of the complex and compound effects which result from sound-image fusions - and zero comprehension of the preceding century of inventions and conventions in linked areas. This marks contemporary techno-rhetoric as severely lacking when viewed (as we shall) from the perspectives of how one uses these technologies both to produce and consume information, and what results from the new forms of synchronism and syncretism which develop out of the technical shortcomings and wild futuristic claims of those same technologies. If 'information is the new commodity', technology accordingly behaves as no more than new packaging design. Like the cellophane wrapping of cigarette packets in the 50s to keep them fresh, faster processors, higher bandwidth and RAM expansion are the 90s equivalent of such phantom improvements in consumer delivery. And just as modernist film theory waffled behind a dense blanket of aural imperception, so does technological theory gabble from a haze of presumed postmodern conjecture.

Defining the interface

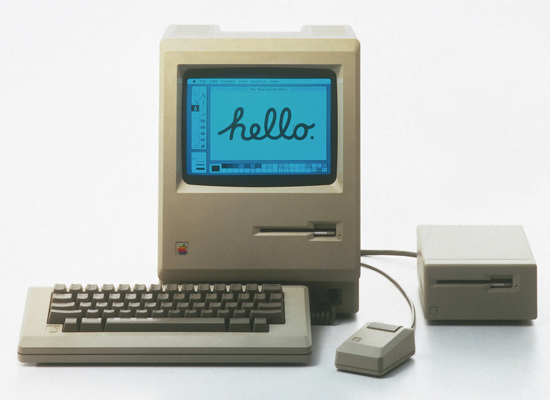

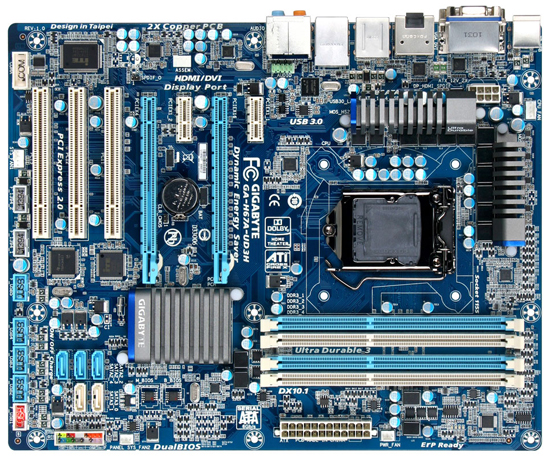

All technology derives from a desire to extend the body in one way or another. Never without sophistication (as the body is far from a simple construct), technologies reflect body consciousness in expanded and contracted form. The visual primacy in the design of most audiovisual technologies - especially their interface - stands as an indictment of how it is presumed we interpret information and experience audiovisuality. Picture the computer: its window onto its world is the monitor. The screen for your gaze; the cube levelled ergonomically at eye level. Part Vitagraph, part ECG unit, it occupies centre stage of ocular activity. To its side, or underneath it, resides the actual computer - its CPU, RAM cache, processors, cards, drives and so on. The monitor specifically allows you to see an optical version of what happens inside the computer. And there resting at your fingertips, the keyboard and mouse: a navigational map for our digits, abstractly recoded into the alphanumerical (the keyboard) and the spatial (the mouse).

Computer design from the outset foresaw little need for the sonic. Interface design was strictly a matter of hand-eye co-ordination. Speakers were - and still are - tucked into the preformed skirt of the monitor, mimicking the early 60s revolution in compact/portable TVs. Accidentally yet revealingly, the computer monitor accrues its status from the portable/domestic era when radios became mobile battery operated transistors, and the family television - itself an optical mutation of the earlier radiogram nestled with glowing tubes in place of the living room hearth - shrank so as to be multiplied and relocatable via the newly roving passageways of the suburban household. The Personal Computer (PC) was designed as an object of consumer demand: something that would go or be where you wanted it to be. As with the TV on the bench in the kitchen, so too the computer on the desk in the den.

Despite its relocatability, the monitor fixes you in position to its projected data. Like the beamed trajectories of the pupil, it aims itself at you, thus binding your line of vision to its central zone and dispelling any awareness of one's surroundings. Peripheral vision fades as a lack of luminousness fails to trigger any neural response outside of the monitor's glow, and all sense of space is sucked into a vortex of screen data. An occasional beep, squawk, tinkle or 2k orchestral sample will prick your ears, but as with most sonic inventions designed to communicate (as opposed to those designed to broadcast, relay and/or reproduce information) the priority is to alert one to impending danger. Fidelity is of no concern under such a mandate. It is also important to note that the computer monitor is designed to direct one's usage of it through a visual logic at the erasure of any aural logic. In other words, the reliance on beamed/focussed/directed data relates to our optical mechanics (experiencing selected vision through the act of focussing) while suppressing external and extraneous spatial sensations which relate to our aural mechanics (experiencing sound waves by being situated within their spatial dispersion).

30 years of computer design are locked into this template. Recent slight deviations - like the ostentatious Anniversary Mac of 1997 and its detached sub woofer - are propelled more by chic design considerations than any sense of an immersive audiovisuality. The proliferation of NuBus and PCI cards within the personal computer does not occur until well into the 90s. In particular, the widespread demand for aptly-named 'Sound Blaster' cards centred on the need to transform the PC into a processing environment that no longer suppressed or denied the fact that sound had become a dimensional engulfing domain which has always run counter to the narrow projectile nature of image. Typically, the expansion of the sonic happens unseen, buried inside the architecture of the hard drive, cued only by the addition of tiny desk speakers.

Playing the work station

The initial domestication of the PC redressed the spooky 60s hangover of computers being supreme machines that would replace all humans. Like movies audience-tested by focus groups in shopping malls, the domesticated PC gives the dumb what they want while making them feel their cerebral capacity is increased through an act of consumption. As the 'user-friendly' computer became more integrated into the home environment, it was crucial in blurring distinctions between work and leisure, between production and consumption.

This is most noticeable in computer games, which were transmitted like a germ from the arcade (a pseudo-social reconstruction of the carnivalesque promenades at the turn of the century) into the home along the migratory pathway of the PC. The 'video game console' (as it originally connected to one's TV monitor) and the PC throughout the 70s merged as they collectively shaped the emerging 'home entertainment system'. Interestingly, the term 'work station' develops in opposition to the increasing use of PCs or game consoles (like the ubiquitous Sony Play Station) primarily as an entertainment centre. Throughout the 80s, the audiovisual landscape of the home - a bastion of leisure and distraction - had not only been transformed yet again, but was redefined as a fluid set of possibilities for screen-based activities. The TV monitor became the battle ground for contestation between ether-broadcast and cable-subscribed content. Through large screen formats and projectors (connected to the Hi-Fi system normally reserved for music alone) it also became a transmogrified cinema. FM-Radio shifted from tinny transistor reception to full-frequency Hi-Fi reception. Concurrently, aural fidelity became the prime force in expanding the experiential factors of one's consumption of these leisure technologies: from Hi-Fi tracks on VHS video tapes to Dolby Surround sound decoder-amplifiers to CD players to auxiliary sub woofers to bombastic sound effects in video/computer games.

By the late 80s - and primarily due to an insatiable appetite for expansive/immersive home entertainment - all audiovisual technologies had increased their aural fidelity at a drastic exponential rate in comparison to what amounted to a decade of stunted visual development. The 80s thus can be marked as a crucial epoch in the advancement of the sonic after it had been halted for so many years during which design and invention were concentrated on components for visual/motion reproduction.

Digitizing the data

While much was - and still is - made of 'the digital revolution', it is too often forgotten that sound preceded image in this supposed revolution. To be correct, the digital era arrives in the 70s with computers through the binary encoding of numerical values into a series of 0s and 1s or 'on/off' pulses. The true meaning of digital lies in the complex algorithmic computations which can be construed from a base binary language. But for most people, 'digital' connotes increased fidelity, high 'quality' and a consequent surge of simulation due to heightened mimetic appearances. Yet while the early 80s saw the rise of postmodern theory and its celebration of the simulacra, there were at the time no concurrent visual technologies which offered evidence of exactly how media reproduction in the 80s was different from the McLuhanesque electronic 60s.

Clearly, postmodern theory was not listening. The advent of professional studio samplers (digital encoders of analogue audio signals into 'wave samples') in 1982 generated the effects of aural simulation which to this day visual technologies have failed to achieve - especially in regard to the dissolution of granular density in reproduction and the redefinition of 'surface noise'. Certainly there have been numerous advances in visual technologies of reproduction over the last two decades (hi-res scanners, laser printers, desk-top publishing software, digital image bureaus, plus an array of animation, matting, compositing, and rendering applications for professional and domestic computer work stations) - but to the informed eye, all define their status as obviously as the rubber of the original Godzilla suits. On the other hand, a 44.1kHz audio sample to this day lives up to the old Memorex lie: is it real or is it 'taped'? Sampling set the agenda for the return of phenomenological inquiry. It decimated McLuhan's notion of medium-based transference and alteration, for a sample simulates not through reconstitution and evocation, but through mirroring its input, thus forming an ontological loop which short circuits attempts to differentiate the original signal/source from its reproduced event.

By 1986, the bulk of the recording industry and the sound post-production arm of the film industry had been radically transformed (just as desktop publishing had similarly redefined the relationship between graphic designer and printer during the same period). Along with standardized synch-pulse systems like MIDI (Musical Instrument Digital Interface - for sequencing and triggering samples) and SMPTE Time Code (for electronically synchronizing striped video tapes to both analogue multi-track recorders and digital sampling/sequencing work stations), the binary encoding, manipulation, decoding, ordering and replaying of digitized audio data furthered the algorithmic and computative parameters of 'being digital' and to an extent set the mathematical agenda for the computer animation boom of the 90s.

Re-inventing the digital

But not only were new means of production developed: new means of storage and retrieval broadened the digital realm throughout the 80s. The introduction of laser discs (LDs) at the start of the 80s quickly rivalled the electro-magnetic medium of VHS video. While LDs took off first in Japan then later in America (aided by both countries sharing the NTSC television system), the later introduction of CDs ('compact discs', as in smaller versions of the initial laser discs) in 1986 capitalized on a side application of the laser medium. Consequently, the CD medium wholly revolutionized the recording industry - to a far greater extent than LDs were able to replace videos. This shift from an audiovisual technology back to an audio medium is a telling reflux of invention: the aural and the sonic so often provide the fertile ground for materially extending and defining the actuality of an audiovisual medium, in opposition to the potentiality which persistently qualifies so many visual inventions.

CDs - that is, the 12cm discs for storing digitized data of any form - have for the past decade become a shimmering medium for various applications. They have been the basis first for music recordings, and then CD-ROM programmes: so-called 'multimedia' forms using rudimentary 'interactive' navigational structures to move one through hyper-lo-fi images, animations and sounds. While memory restrictions limited the development of CD-ROMs (not to mention a sacrifice in audiovisual and post-structuralist imagination for the fetishization of a specious 'interactivity'), CDs now constitute the storage medium for digital cameras (with discs replacing negative emulsion), DVDs - Digital Video Discs - and just about any data that can be 'burnt' onto the now-affordable CD burners. This is a strong example of the amoeba-like nature of all storage media and the reproduction technologies they can contain. Nothing is really superseded as if invention follows a linear conveyor belt - although market advertising and journalistic histories convey such a sense of progression.

The 90s has witnessed a return of the visual trumpeted by the most hollow claims. In reverse proportion to the advances in both the production and consumption of aural phenomena of the preceding decade, visual invention in the 90s is best represented by 'Quicktime movies' (QTMs): thumbnail lo-resolution pixelated motion-captures which move in jerky freeze-frame/jump-cut time. Hand-cranked Nickelodeons generate a higher degree of verisimilitude. Yet, a perverse visual logic governs this phase in audiovisual evolution, as once again the main attraction of QTMs is that they bring motion image effects to the computer monitor, thereby returning the PC monitor into an embryonic form of the video monitor connected to the family TV set. By the mid-90s, you could watch 'movies' on your computer - with extreme limitations in quality and duration, and with the audio fidelity of a phone answering machine. Following the explosion of compression software over the last five years, DVDs not only resurrected the CD format as an entertainment-based storage/retrieval medium, but also completed the cycle begun by QTMs. Now you can watch DVDs at a suitable resolution occupying a full screen with passable audio. The power of the PC monitor has returned.

Loving the software

As stated earlier, the monitor is a window to its own world. The same world, sitting on a billion desks in a billion dens and offices. At the same time that office workers marvelled at QTMs and took family snapshots with digital cameras, Microsoft implied a McLuhanesque travelogue and asked "Where do you want to go today?". As if you were going anywhere except back into the pre-designed parameter-heavy but user-friendly world of your central processing unit (CPU - the 'brain' at the 'heart' of your computer's 'nervous system') and hard drive (HD).

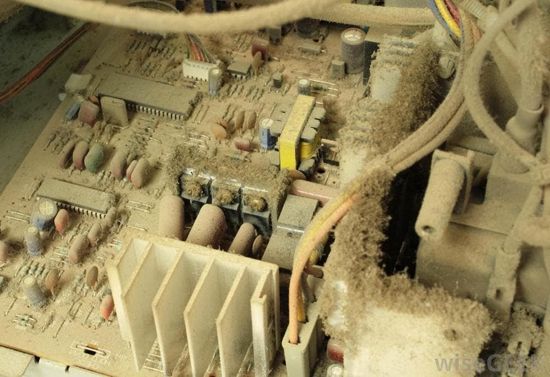

The 90s has witnessed the congealing of all the fluid possibilities of the nomadic home environment and its myriad of mutated audiovisual technologies into the computer work station. Far from surging forth into the next millennium, work station improvements are designed to give more power to the work station as an edifice which increases your dependency on its spiralling and shrinking inadequacies. Like a reverse recording of a single cell splitting and multiplying, the computer work station has attracted all 'peripherals' to its CPU: CD burners, CDR drives, external HDs, Zip/Jaz drives, scanners, expansion cards, modems. In all likelihood - and in absolute contradiction to every nomadic decentred ethic which intones techno-rhetoric as a postmodern dialect - the design of computers has fostered a monolithic centralization of its power. And while angst is still wrought over the 'abuse' of the computer as a 'play station', computer games - like all leisure activities - are at the ultimate service of increasing work productivity through the fetishization of speed. Exciting children with computer games and donating computers to educational institutions is a canny and effective way of instigating and maintaining a reliance on computers in the work environment.

The computer - its monitor, its work station, its object-design - appears capable of doing anything you want. It seemingly has the capacity to know what you are thinking, guess what you want, and grant your wishes. This feat - a digital era equivalent of the old gipsy woman who reads your fortune - is achieved through software. While the computer's CPU is essentially a blank brain (despite the ideological and philosophical biases implicit in its binary processing design), software functions as a series of 'personality plug-ins'. Once cued to operating, behaving and functioning in a computer-friendly mode, you will undoubtedly find software that will produce results that either you had previously thought impossible (due to your own limited thinking) or you find thrilling to have produced yourself (the grand self-empowerment of pulling down a few menus and clicking a mouse a few times). In fact, this hazy heady ego-trip which the computer and its amazing technicolour software sends you on is largely responsible for the inaccurate, imperceptive and ill-founded claims as to what it can do.

The merger between consumption and production is on the one hand a social reality, but on the other a technological lie. The PC - as a domestic instrument of control - will always come up against limitations which separate it from the professional domain of dedicated work stations which have specific aims for their technological usage. In fact, so much software is essentially the modification of a set of tasks and operations which previously existed in dedicated work stations which used their own proprietary coding and processing language to enact their tasks. Drum machines & sequencers, sampling wave form editors, effects processors, FM synthesizers, HD & non-linear multi-track recorders, non-linear vision editors - all pre-existed as custom stand-alone work stations designed in forms quite distinct from the monitor-HD configuration of the PC as we now know it. (Plus, the digital revolution in sound/music happened with 'play station' applications - Atari, Amiga, etc. - well before their PC developments.) These 'stand-alones' produced by a myriad of non-computer companies like Roland, Yamaha, Ensoniq, Avid and countless others were always designed to carry through a technological act to its conclusion. In audio, this meant 'mastering'; in soundtrack post-production, 'synchronizing'; in animation, 'rendering'; and in vision editing, 'outputting'. Their processing capabilities mostly foresaw that issues of hi-fidelity effect and surface eventually had to be proven by the final media's containment and convincing generation of those effects/surfaces, and the work station environment was simply the intermediary zone for producing the work prior to its 'publishing' or presentation in an audio, visual or audiovisual medium. 

The PC simply mimics these pre-established effects - always at a lower resolution and fidelity, via reduced options for modulation and modification, at speeds proportionate to the simplicity of those options, and with severely limited means for outputting to a non-PC medium (e.g. video, film, large-scale print formats, etc.). Granted, the PC has certainly 'revolutionized' the domestic front - but only through affording many a non-professional the multimedia thrill of being a 'virtual professional': from bank clerks animating slick graph charts of their superannuation funds to pimply kids recreating the big-screen fly-by of the Death Star from STAR WARS.

Burning the bible

This scattergun overview of computer/digital/online audiovisual technologies is intent on making one point clear: despite whatever new era 'digital evangelists' proclaim we now live in, the aural aura resulting from PC software applications is retrograde, inferior and unacceptable. From scratchy samples on a HD to the inane looping of memory-friendly sound bites on CDRs to fractured online transmissions like audio-streaming and Real Audio plug-ins to basic wave sample transformations on software like Sound Edit 16 - the end results are akin to the early musique concrète experiments of Pierre Schaeffer and Pierre Henry at the start of the 50s. But whereas musique concrète deliriously followed the collapse of the 'negativity' of noise into the expanded abstracted non-judgemental field of sound, audio in PCs (especially CDR and online manifestations) is confined to diminishing effects wherein it is intended that we interpret processing as a means of production. I do not care how a CPU does anything on my computer: if it sounds like a cassette tape played through a telephone receiver, I'm not listening.

This is not to say that new audiovisual technologies are bankrupt per se. The genuine innovations in the overall evolution of audiovisual developments in the digital domain lie firstly in the tension between the conscious experiential states which define the phenomenological arcs of seeing and hearing, and secondly in the disjuncture between visual desire and aural delivery. Therein we can discover the crucial factors which determine audiovisuality - modulation, spatialization, synchronicity, temporality, etc. - and which technologies only ever replicate, simulate and generate, but never predate or even actuate. It must not be forgotten that sound pre-exists its recording, and that all recordings return to sound. In this sense - especially so in an ongoing age of technological acceleration - the medium is not the massage, but merely its passage from the corpus to aura, from material to matter.

Time line

A rough guide to the chronological layering of audio components in audiovisual technologies. Dates refer to the wider proliferation of a format rather than the moment of its invention. Each format/medium of course underwent a series of sub-transformations, media-mergers and unexpected modifications, some spanning many years past the listed date, thereby marking many of these inventions as dependent upon, augmented to and/or subverted by other technologies.

1964 transistor radios

1968 portable televisions

1975 video game consoles

1979 personal computers (PCs)

1980 play stations & home video theatres

1982 samplers, MIDI sequencers, SMPTE time code & laser discs (LDs)

1983 Dolby surround sound theatre units

1985 compact discs & Dolby surround sound home units

1988 PC sound cards

1989 internet

1992 Read-Only compact discs (CD-ROMs)

1993 plug-ins

1994 Quicktime movies (QTMs)

1995 mini discs (MDs)

1997 digital video discs (DVDs)

Dedicated to Zippy The Pinhead & Jeff Mills.

Text © Philip Brophy 1999. Images © Respective copyright holders